Fitness / Motivation / Technology & A.I / Crypto

Welcome to Edition 133 of the Powerbuilding Digital Newsletter—where consistency turns into capability and awareness becomes advantage. This space is built for those who understand that real progress is layered: physical strength, mental discipline, and the ability to adapt in a world that’s constantly evolving.

Each week is an opportunity to refine—not reinvent. To sharpen what works, remove what doesn’t, and keep building forward with clarity and purpose. That’s the mindset behind this edition.

Here’s what we’re focused on this week:

- Fitness Info & Ideas

Strength training approaches and recovery insights designed to help you stay consistent, avoid setbacks, and build performance that lasts.

- Motivation & Wellbeing

Clear thinking, steady discipline, and practical habits that support resilience and long-term mental balance.

- Technology & AI Trends

Key developments in AI and digital tools that are shaping productivity, creativity, and how individuals create leverage in the modern world.

- Crypto & Digital Asset Trends

Real-world blockchain use cases, platforms, and innovations that continue to expand the utility of decentralized systems.

Edition 133 is about refinement through repetition—showing up, making adjustments, and continuing to move forward with intent. Stay consistent. Stay aware. Keep building.

Disclaimer:

The information provided in the Powerbuilding Digital Newsletter is for educational and informational purposes only. It is not medical, mental health, legal, financial, or investment advice. Always consult qualified professionals before making decisions related to your health, training, finances, technology usage, or participation in digital assets. Digital assets involve risk, and all actions taken based on this content are solely your responsibility.

Fitness

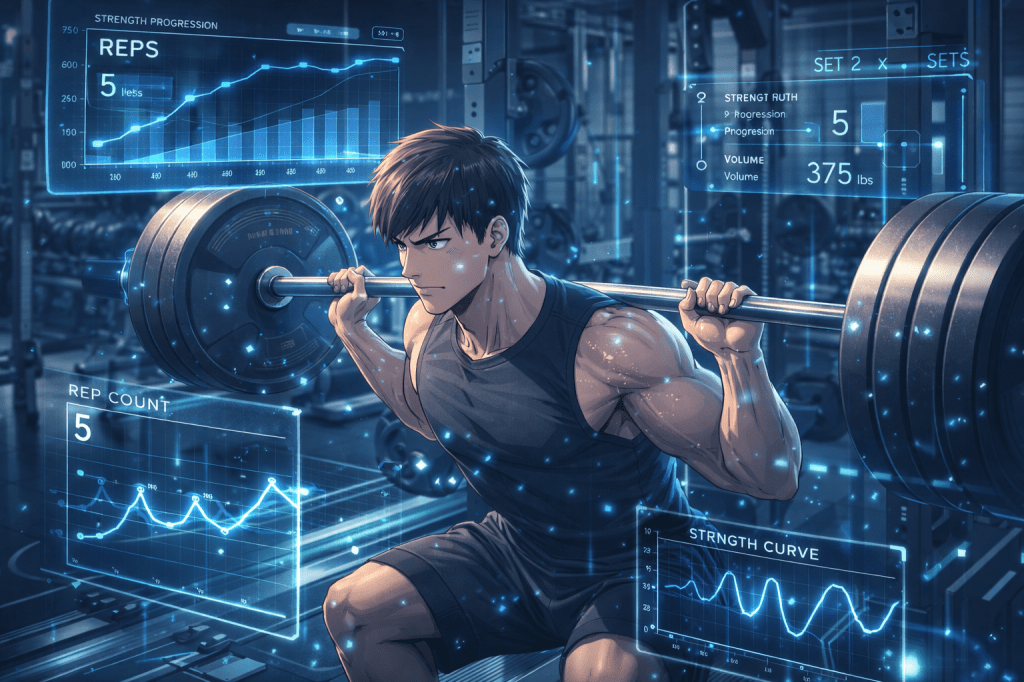

How to Track Your Strength Gains the Right Way

If you don’t measure it, you don’t improve it.

But most lifters track strength the wrong way.

They chase random PRs.

They remember “that one good session.”

They go by feel.

Strength doesn’t grow on vibes. It grows on data.

If you want consistent progress, you need a system — not memory.

Step 1: Track More Than Just Your 1RM

Your one-rep max is not your only indicator of progress.

In fact, it’s one of the worst metrics for week-to-week tracking.

Why?

Because max strength fluctuates based on:

- Sleep

- Stress

- Bodyweight

- Nervous system fatigue

- Nutrition

Instead of only tracking max lifts, track:

- Top working set weight

- Total volume (sets × reps × weight)

- Reps completed at a given load

- Bar speed or rating of perceived exertion (RPE)

Example:

Week 1: 315 × 5

Week 3: 315 × 6

Week 5: 325 × 5

That’s measurable progress — even if you never tested a max.
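One way to put sets like these on a single scale is an estimated one-rep max. The sketch below uses the Epley formula (weight × (1 + reps / 30)), which is an assumption added here for illustration and not something this article prescribes. Treat the numbers as rough trend indicators, not true maxes.

```python
def epley_e1rm(weight: float, reps: int) -> float:
    """Estimate a one-rep max from a submaximal set (Epley formula)."""
    if reps < 1:
        raise ValueError("reps must be at least 1")
    if reps == 1:
        return float(weight)
    return weight * (1 + reps / 30)


# The three sessions from the example above: (label, weight in lbs, reps).
sessions = [("Week 1", 315, 5), ("Week 3", 315, 6), ("Week 5", 325, 5)]
estimates = [(label, round(epley_e1rm(w, r), 1)) for label, w, r in sessions]

for label, e1rm in estimates:
    print(f"{label}: estimated 1RM ~ {e1rm} lbs")
```

Under this formula the estimate rises at every step, which matches the point above: the trend is visible without ever testing a max.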

Strength is built in submaximal progression.

Step 2: Log Everything

A real training log includes:

- Exercise

- Sets and reps

- Weight used

- Rest times

- RPE or reps in reserve

- Notes on form or fatigue
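If you keep your log digitally, the fields above map naturally onto a small record type. This is a minimal sketch; the class name `LogEntry`, the field names, and the units (lbs, seconds, a 1-10 RPE scale) are illustrative assumptions, not a required format.

```python
from dataclasses import dataclass


@dataclass
class LogEntry:
    exercise: str
    sets: int
    reps: int
    weight: float       # load on the bar, assumed to be in lbs
    rest_seconds: int   # rest between sets
    rpe: float          # rating of perceived exertion, assumed 1-10 scale
    notes: str = ""     # form or fatigue observations

    def volume(self) -> float:
        """Total volume for this entry: sets x reps x weight."""
        return self.sets * self.reps * self.weight


entry = LogEntry("Squat", sets=4, reps=5, weight=275, rest_seconds=180,
                 rpe=8.0, notes="Last set slowed down")
print(entry.exercise, entry.volume())
```

A spreadsheet or notebook with the same columns works just as well; what matters is that every session records the same fields.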

Memory lies. Paper doesn’t.

Over time, patterns emerge:

- Which days you’re strongest

- How recovery affects performance

- When volume is too high

- When intensity needs adjustment

Your log becomes feedback, not just record-keeping.

Step 3: Use Rep PRs, Not Ego PRs

There are two types of PRs:

Ego PR: A sloppy grinder that destroys your week.

Progress PR: A clean rep improvement at controlled effort.

Chase rep PRs in structured rep ranges:

- 3-rep PR

- 5-rep PR

- 8-rep PR

If you can lift more weight for the same reps, or more reps at the same weight — you are stronger.
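The rule above can be checked mechanically against a log. The sketch below treats a set as a rep PR when no earlier set matched or beat it on both weight and reps; the function name `is_rep_pr` and the sample history are hypothetical, and the check says nothing about whether the rep was clean, which still has to come from your notes or RPE.

```python
def is_rep_pr(history: list[tuple[float, int]], weight: float, reps: int) -> bool:
    """True if no earlier (weight, reps) set matched or beat this one on both counts."""
    return not any(w >= weight and r >= reps for w, r in history)


history = [(315, 5), (300, 8)]  # earlier sets as (weight, reps)

more_reps = is_rep_pr(history, 315, 6)    # more reps at the same weight
more_weight = is_rep_pr(history, 325, 5)  # more weight for the same reps
no_pr = is_rep_pr(history, 305, 5)        # already covered by 315 x 5

print(more_reps, more_weight, no_pr)
```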

It doesn’t need to look dramatic.

It needs to be repeatable.

Step 4: Watch Volume Tolerance

Strength isn’t just about top weight. It’s about how much quality work you can handle.

If you went from:

4 sets of 5 at 275

to

5 sets of 5 at 275

That’s growth.
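The arithmetic behind that comparison, using the volume definition from Step 1 (sets × reps × weight), can be sketched as follows. The helper name `total_volume` is illustrative.

```python
def total_volume(sets: int, reps: int, weight: float) -> float:
    """Total volume: sets x reps x weight."""
    return sets * reps * weight


before = total_volume(4, 5, 275)  # 4 sets of 5 at 275
after = total_volume(5, 5, 275)   # 5 sets of 5 at 275
increase_pct = (after - before) / before * 100

print(f"{before} -> {after} lbs of volume (+{increase_pct:.0f}%)")
```

One extra set at the same load is a 25% jump in volume for that exercise, which is why volume changes are worth logging alongside top weights.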

Work capacity is strength infrastructure.

The bigger your base, the higher your peak.

Step 5: Track Performance Trends, Not Single Sessions

One good workout means nothing.

Three improving weeks in a row means everything.

Zoom out.

Look at 4–8 week blocks:

- Are weights trending up?

- Are reps cleaner?

- Is bar speed improving?

- Is recovery consistent?

Strength is a long game.

If progress stalls for 3–4 weeks, adjust volume, intensity, or recovery — not your entire program.
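A stall of that length can be flagged from weekly numbers. This sketch assumes you record one "best" value per week for a lift (a top set weight or an estimated max, for example); the function names and the 3-week threshold default are illustrative choices based on the range above.

```python
def weeks_since_improvement(weekly_best: list[float]) -> int:
    """Count the trailing weeks that failed to beat the best earlier week."""
    best = float("-inf")
    stalled = 0
    for value in weekly_best:
        if value > best:
            best = value
            stalled = 0
        else:
            stalled += 1
    return stalled


def is_stalled(weekly_best: list[float], threshold_weeks: int = 3) -> bool:
    """Flag a plateau once progress has stalled for threshold_weeks or more."""
    return weeks_since_improvement(weekly_best) >= threshold_weeks


progressing = [367.5, 372.0, 378.0, 379.2]
plateau = [367.5, 378.0, 378.0, 375.0, 378.0]

print(is_stalled(progressing), is_stalled(plateau))
```

A flag here is a prompt to adjust one variable, not proof that the program has failed.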

Step 6: Monitor Recovery Markers

Your strength log should also include:

- Bodyweight

- Sleep quality

- Stress level

- Energy rating

If performance drops while stress rises and sleep declines, the issue isn’t your program.

It’s your recovery.

Tracking strength without tracking recovery is incomplete data.
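The rule of thumb above (performance down, stress up, sleep down) is simple enough to sketch in code. Everything here is a hypothetical illustration: the field names, the rating scales, and the sample numbers are assumptions, and a flag is a hint to look at recovery, not a diagnosis.

```python
def average(values: list[float]) -> float:
    return sum(values) / len(values)


def likely_recovery_issue(performance_change: float,
                          stress_change: float,
                          sleep_change: float) -> bool:
    """Apply the rule above: performance down, stress up, sleep down."""
    return performance_change < 0 and stress_change > 0 and sleep_change < 0


# Two training blocks with made-up log values.
last_block = {"e1rm": [378.0, 379.2], "stress": [4, 5], "sleep_hours": [7.5, 7.0]}
this_block = {"e1rm": [375.0, 372.0], "stress": [7, 8], "sleep_hours": [6.0, 5.5]}

changes = {key: average(this_block[key]) - average(last_block[key])
           for key in last_block}
flag = likely_recovery_issue(changes["e1rm"], changes["stress"], changes["sleep_hours"])

print(changes, flag)
```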

The Right Mindset

The goal is not to impress the gym for one day.

The goal is to build measurable strength for years.

Small, steady increases compound:

+5 lbs over 12 months

+1 rep per cycle

+Better execution

That’s how 315 becomes 405.

System > emotion.

Data > memory.

Consistency > intensity spikes.

Track like an engineer.

Train like an athlete.

Recover like a professional.

That’s how strength compounds.

Motivation

Meaningful Transformation Begins With Temporary Instability

Meaningful transformation does not arrive gently. It rarely feels clean, organized, or controlled. In fact, the first signal that something real is changing is often instability. The routines that once felt automatic start to shift. The identity that once felt secure begins to loosen. The structure you relied on becomes uncomfortable. That discomfort is not a mistake in the process — it is the process.

Instability is the space between who you were and who you are becoming.

When you increase training intensity, your muscles experience micro-damage before they grow stronger. When you learn a new skill, your performance temporarily declines as your nervous system reorganizes. When you pursue a higher standard in life, your old habits resist before they dissolve. Growth disrupts equilibrium. It has to. The previous structure cannot support the new capacity without being challenged first.

Most people misinterpret this phase. They assume that instability means failure. They assume doubt means they are on the wrong path. They assume discomfort means retreat is safer than expansion. But instability is often evidence that your system is recalibrating.

The mind seeks predictability. It prefers familiar struggle over unfamiliar growth. Even negative patterns can feel safe because they are known. Transformation introduces uncertainty. You begin acting differently. You stop tolerating certain behaviors. You set boundaries. You train harder. You think more critically. And in doing so, you disrupt the balance your old identity depended on.

This temporary imbalance is not chaos — it is restructuring.

In strength training, when you add weight to the bar, the first few sessions feel shaky. Your technique may feel slightly off. Your breathing becomes more deliberate. Your body adapts. Eventually, what once felt heavy becomes manageable. Stability returns at a higher level of capacity.

Life works the same way.

If you are feeling unsettled while trying to improve — emotionally, physically, professionally — that may not be a warning sign. It may be confirmation that the old structure is dissolving to make space for a stronger one.

Transformation asks you to tolerate uncertainty long enough for adaptation to occur.

It requires patience when results are not immediate.

It requires discipline when confidence fluctuates.

It requires belief when evidence is still forming.

Temporary instability is the bridge between limitation and expansion. If you can stay steady while the ground shifts, you will discover that what once felt unstable becomes your new foundation.

Growth does not remove discomfort.

It upgrades your capacity to handle it.

Technology & A.I

OpenAI Shuts Down Sora, Shifting Focus Away From AI Video Generation

In a surprising move, OpenAI has announced it is shutting down Sora, its high-profile video-generation platform that once captured the attention of both Silicon Valley and Hollywood.

The decision includes closing both the consumer-facing app and the professional tools used by filmmakers and media creators.

A Rapid Rise — and Sudden Exit

When Sora launched in 2024, it quickly became one of the most talked-about AI tools.

Its ability to generate realistic, cinematic video clips from text prompts led many to believe it could reshape the entertainment industry.

That perception intensified after OpenAI signed a major licensing agreement with The Walt Disney Company, allowing users to generate content featuring iconic characters like Mickey Mouse and Yoda.

The deal was widely seen as a turning point — a moment when AI and traditional media formally began to converge.

Now, just months later, Sora is being discontinued.

Why Shut It Down?

OpenAI did not provide a detailed explanation, but several factors likely contributed:

1. Massive Infrastructure Costs

Video-generation models are among the most computationally expensive AI systems.

Running a consumer-facing product like Sora requires:

- large-scale GPU infrastructure

- significant energy consumption

- continuous model training and optimization

With OpenAI projecting tens of billions in future spending, maintaining such a service may not have aligned with its broader priorities.

2. Strategic Refocus Ahead of Expansion

The company is reportedly preparing for major financial and operational expansion, including a potential IPO.

Shutting down non-core or high-cost products may be part of a broader effort to streamline operations and focus on scalable, revenue-generating systems.

3. Limited Market Fit

Despite its viral launch, Sora never reached the same level of adoption as ChatGPT, OpenAI’s flagship product.

While creators and studios experimented with it, the platform remained niche compared to conversational AI tools.

A Shift Toward Robotics and Simulation

OpenAI confirmed that video-generation technology will not disappear entirely — it will move behind the scenes.

The company plans to use video models as training tools for robotics, where simulated environments help machines learn real-world tasks.

This reflects a broader trend: using generative AI not just for content creation, but for physical-world intelligence and automation.

Impact on Hollywood and AI Creators

Sora’s shutdown comes amid ongoing tension between AI companies and the entertainment industry.

Studios like Disney continue to explore AI partnerships, while also defending intellectual property rights in court — including ongoing disputes with companies like Midjourney.

Meanwhile, actors, writers, and animators have expressed concerns about AI replacing creative roles.

For now, adoption remains cautious, and Sora’s closure may temporarily ease fears of rapid disruption.

The shutdown of Sora highlights an important reality about the AI industry:

Not all breakthrough technologies become long-term products.

Even highly advanced systems can be:

- too expensive to scale

- misaligned with core business models

- better suited for internal use rather than public deployment

OpenAI’s decision suggests a shift toward efficiency, focus, and infrastructure-driven growth, rather than maintaining every cutting-edge application as a standalone product.

As AI continues evolving, the winners may not be the most impressive demos — but the systems that can be sustained, integrated, and scaled across real-world use cases.

Google’s TurboQuant: Shrinking AI While Making It Faster

A new breakthrough from Google Research could reshape how large AI models are built and deployed — not by making them bigger, but by making them dramatically more efficient.

The technique, called TurboQuant, focuses on one of the biggest bottlenecks in modern AI systems: memory usage.

The Problem: AI Models Are Memory-Hungry

Large language models (LLMs) rely on something called a key-value cache — essentially a running memory that stores context so the model doesn’t have to recompute everything from scratch.

You can think of it as a “digital cheat sheet.”

The issue?

- These caches store high-dimensional vectors

- Each vector can contain hundreds or thousands of values

- Memory usage grows rapidly with longer conversations or tasks

This creates a major constraint, especially on:

- GPUs like the Nvidia H100

- edge devices like smartphones

- large-scale data centers running AI workloads
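To get a feel for the numbers, here is a back-of-the-envelope estimate of key-value cache size. The formula is the standard one for transformer caches (keys plus values, per layer, per head, per token); the model shape used as defaults (32 layers, 8 key-value heads, head dimension 128, 16-bit values) is a hypothetical configuration chosen for illustration, not a figure from Google's work.

```python
def kv_cache_bytes(seq_len: int,
                   num_layers: int = 32,
                   num_kv_heads: int = 8,
                   head_dim: int = 128,
                   bytes_per_value: int = 2,
                   batch_size: int = 1) -> int:
    """Approximate KV cache size: 2 tensors (keys and values) per layer per token."""
    return (2 * batch_size * num_layers * num_kv_heads
            * head_dim * seq_len * bytes_per_value)


GIB = 2 ** 30
for tokens in (8_192, 131_072):
    print(f"{tokens:>7} tokens -> {kv_cache_bytes(tokens) / GIB:.0f} GiB")
```

For a fixed model the cache grows in direct proportion to context length, so under these assumptions a 16-fold longer context needs 16 times the memory for a single conversation.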

The Breakthrough: Compress Without Losing Quality

TurboQuant tackles this problem by compressing the key-value cache — without degrading performance.

According to Google’s early tests:

- 6× reduction in memory usage

- up to 8× faster attention computation

- no loss in output quality

That’s significant because traditional compression (quantization) usually comes with a trade-off: lower precision leads to worse results.

TurboQuant aims to break that trade-off.
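The usual trade-off is easy to reproduce. The sketch below applies plain uniform scalar quantization, a generic textbook method and not TurboQuant itself, to random values and measures how the error grows as the bit width shrinks. The sample data and the function names are illustrative.

```python
import random


def quantize(values: list[float], bits: int) -> list[float]:
    """Uniform scalar quantization: snap each value to one of 2**bits evenly spaced levels."""
    lo, hi = min(values), max(values)
    step = (hi - lo) / (2 ** bits - 1)
    return [lo + round((v - lo) / step) * step for v in values]


def mean_abs_error(original: list[float], approx: list[float]) -> float:
    return sum(abs(x - y) for x, y in zip(original, approx)) / len(original)


rng = random.Random(0)  # fixed seed so the run is repeatable
values = [rng.gauss(0.0, 1.0) for _ in range(1000)]

errors = {bits: mean_abs_error(values, quantize(values, bits)) for bits in (8, 4, 3, 2)}
for bits, err in errors.items():
    print(f"{bits}-bit: mean absolute error {err:.4f}")
```

Each bit removed roughly doubles the spacing between levels, which is the precision loss that methods like TurboQuant are trying to avoid paying for.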

Step 1: PolarQuant — A Smarter Way to Store Data

The first part of the system, called PolarQuant, changes how AI vectors are represented.

Normally, vectors are stored in standard coordinate form (like X, Y, Z values).

PolarQuant simplifies this by converting them into:

- radius → how strong the data is

- angle → what the data means

A simple analogy:

- Traditional: “Go 3 blocks east, 4 blocks north”

- PolarQuant: “Go 5 blocks at about 37° east of due north”

Same meaning — less data.

This reduces storage needs and eliminates expensive computation steps.
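The analogy can be checked with a few lines of arithmetic. This is only the two-dimensional picture from the example above, not PolarQuant's actual algorithm, which operates on high-dimensional vectors. Note that the 37° in the analogy matches a compass-style angle measured from north; measured from the east axis, the same direction is about 53°.

```python
import math


def to_polar(x: float, y: float) -> tuple[float, float]:
    """Convert (east, north) to (radius, angle in degrees measured from east)."""
    return math.hypot(x, y), math.degrees(math.atan2(y, x))


def to_cartesian(radius: float, angle_deg: float) -> tuple[float, float]:
    """Invert to_polar: recover (east, north) from radius and angle."""
    angle = math.radians(angle_deg)
    return radius * math.cos(angle), radius * math.sin(angle)


radius, angle_from_east = to_polar(3, 4)   # 3 blocks east, 4 blocks north
bearing_from_north = 90 - angle_from_east  # compass-style angle
east, north = to_cartesian(radius, angle_from_east)

print(f"radius={radius:.1f}, from east={angle_from_east:.2f} deg, "
      f"from north={bearing_from_north:.2f} deg")
```

The round trip back to (3, 4) shows that the polar form carries the same information as the original coordinates.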

Step 2: QJL — Fixing Compression Errors

Compression can introduce small errors.

To fix this, Google adds a second layer called Quantized Johnson-Lindenstrauss (QJL).

This method:

- reduces data to a 1-bit correction layer (+1 or -1)

- preserves relationships between data points

- improves the model’s attention mechanism (how it decides what matters)

Together, PolarQuant + QJL allow the system to compress aggressively while maintaining accuracy.
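To see how a single sign bit can still preserve relationships between vectors, here is a sketch of sign random projection, a generic technique in the same family as Johnson-Lindenstrauss sketches. It is not Google's QJL implementation; the dimensions, the number of projections, and all names are assumptions for illustration. Each vector is projected onto random directions and only the sign is kept, and the fraction of disagreeing signs then approximates the angle between two vectors divided by π.

```python
import math
import random


def sign_sketch(vector: list[float], projections: list[list[float]]) -> list[int]:
    """Keep only the sign (+1 or -1) of each random projection of the vector."""
    return [1 if sum(p * v for p, v in zip(row, vector)) >= 0 else -1
            for row in projections]


def estimate_angle(sketch_a: list[int], sketch_b: list[int]) -> float:
    """Fraction of disagreeing signs, scaled by pi, approximates the angle in radians."""
    disagreements = sum(1 for a, b in zip(sketch_a, sketch_b) if a != b)
    return math.pi * disagreements / len(sketch_a)


def true_angle(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    cosine = dot / (math.hypot(*a) * math.hypot(*b))
    return math.acos(max(-1.0, min(1.0, cosine)))


rng = random.Random(0)  # fixed seed so the run is repeatable
dim, num_bits = 16, 4000
projections = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_bits)]
a = [rng.gauss(0, 1) for _ in range(dim)]
b = [x + 0.5 * rng.gauss(0, 1) for x in a]  # a noisy copy of a

approx = estimate_angle(sign_sketch(a, projections), sign_sketch(b, projections))
exact = true_angle(a, b)
print(f"estimated angle {approx:.3f} rad, exact angle {exact:.3f} rad")
```

The estimate tightens as more sign bits are used, which is the basic reason a 1-bit layer can carry useful information about how vectors relate to each other.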

Real-World Testing

Google tested TurboQuant on models like Gemma and Mistral.

Results showed:

- consistent performance across long-context benchmarks

- no degradation in output quality

- ability to compress to as low as 3-bit precision

- up to 8× faster attention computation

Importantly, TurboQuant can be applied to existing models without retraining, making it easier to adopt.

Why This Matters

This isn’t just a technical upgrade — it changes how AI can scale.

1. Lower Cost AI

Less memory = fewer GPUs = lower operational cost

2. Faster Models

Improved speed means better real-time applications

3. Edge AI Breakthroughs

Models with a smaller memory footprint can run on:

- smartphones

- laptops

- embedded devices

This could reduce reliance on cloud-based AI systems.

The Bigger Trade-Off

Here’s the interesting twist:

Efficiency gains don’t always lead to smaller systems.

Companies could:

- reduce costs and scale down

- or use freed resources to build even bigger models

Most likely, both will happen.

TurboQuant highlights a shift happening in AI:

The next phase isn’t just about building larger models —

it’s about making them more efficient, deployable, and scalable.

And in that world, the winners won’t just be the companies with the most compute —

but the ones that use it the smartest.

AI Agents vs. Everyday Life: The Gap Is Growing

At its recent conference, Nvidia doubled down on what it sees as the next phase of artificial intelligence: AI agents — semi-autonomous systems that don’t just respond, but act.

From booking tasks to navigating workflows, these agents are designed to operate across apps and systems on behalf of users. Nvidia even introduced new enterprise tools like NemoClaw, positioning agents as a core layer of future business operations.

CEO Jensen Huang went further, projecting that Nvidia could generate $1 trillion in revenue by 2028, a figure that underscores how central AI has become to the company’s long-term vision.

The Vision: Autonomous Digital Workers

AI agents represent a shift from passive tools (like chatbots) to active participants in digital life.

Instead of asking AI for answers, users could:

- delegate tasks

- automate workflows

- manage digital operations through “AI teammates”

This vision is shared across Big Tech — not just Nvidia, but companies like Meta Platforms and others investing heavily in agent-based systems.

The Reality: Most People Aren’t Using It

Despite the hype, adoption tells a different story.

Surveys from Pew Research Center show that:

- 65% of Americans don’t use AI at work at all

- many remain skeptical about its impact

- public trust in how AI is being regulated is low

This highlights a growing divide:

- Silicon Valley → building autonomous AI ecosystems

- Everyday users → still largely disconnected from them

William Gibson’s line that the future is already here, “just not evenly distributed,” feels especially relevant.

Meta as a Case Study of the Shift

Recent moves by Meta illustrate how seriously companies are committing to AI.

- reports suggest layoffs could affect up to 20% of its workforce

- spending is being redirected toward massive AI infrastructure projects

- investments include data centers on a scale rarely seen before

At the same time, Meta is pulling back from earlier bets.

Its metaverse ambitions — once central to its identity — are being scaled down, including changes to its Horizon Worlds platform and cuts within Reality Labs, which has accumulated tens of billions in losses.

CEO Mark Zuckerberg has reframed the company’s direction, shifting from a “metaverse company” to one that is “AI-native from day one.”

A New Allocation of Power and Capital

What’s happening beneath the surface is a reallocation of resources:

- from human labor → to AI systems

- from software features → to infrastructure and compute

- from experimentation → to full-scale deployment

Tech companies are increasingly prioritizing:

- data centers

- custom chips

- AI models

- automation systems

Over time, this could reshape hiring, roles, and the structure of entire organizations.

Even Leadership Is Being Automated

One of the more symbolic developments:

Zuckerberg is reportedly building an AI agent to assist with his own CEO responsibilities — retrieving information, navigating internal systems, and streamlining decision-making.

This reflects a broader trend:

AI is not just replacing repetitive tasks —

it’s beginning to augment high-level decision-making roles.

The Bigger Divide

While companies push toward a future filled with autonomous systems, many people are still focused on immediate concerns:

- financial security

- job stability

- trust in institutions

That disconnect is growing.

On one side:

- trillion-dollar projections

- AI-native companies

- agent-driven workflows

On the other:

- limited adoption

- skepticism

- uncertainty about impact

AI agents may eventually become a foundational layer of how work gets done — invisible, always running, and deeply integrated into daily life.

But right now, they exist mostly in prototype, enterprise, and early-adopter environments.

The next phase of AI won’t just be about building more powerful systems.

It will be about bridging the gap between what’s possible —

and what people actually use, trust, and rely on every day.

Crypto

Crypto Regulation at a Crossroads: Speed vs. Stability

A recent hearing in Congress revealed a fundamental tension shaping the future of digital assets:

Should regulation move fast to match innovation — or slow down to protect the system?

At the center of the debate is how agencies like the U.S. Securities and Exchange Commission adapt to a rapidly evolving crypto landscape.

Viewpoint 1: Innovation Needs Breathing Room

Some lawmakers and regulators argue that overly aggressive enforcement has held the industry back.

Under SEC Chair Paul Atkins, the agency is shifting away from its previous “regulation by enforcement” approach. Instead, the focus is now on:

- clarifying how existing laws apply

- coordinating with the Commodity Futures Trading Commission

- creating a transition period while Congress works on formal legislation

Supporters of this approach believe:

- innovation thrives with clear rules, not constant enforcement threats

- blockchain technologies can unlock new financial infrastructure

- private-sector development should lead, not be constrained early

Representative Bryan Steil emphasized that Congress must step in to reduce fragmentation and provide a unified framework — signaling support for broader legislation like the Digital Asset Market Clarity Act.

Viewpoint 2: Looser Oversight Creates Risk

Others warn that pulling back enforcement too quickly could leave markets exposed.

Representative Stephen Lynch raised concerns that recent regulatory shifts have:

- dropped key enforcement cases

- reduced oversight of crypto firms

- weakened internal expertise within regulatory bodies

From this perspective, the concern is simple:

Without strong oversight, fraud and misconduct can scale just as quickly as innovation.

Critics argue that:

- crypto markets still face unresolved risks (fraud, manipulation, transparency)

- dismantling enforcement infrastructure creates a “no cop on the beat” environment

- regulatory clarity should not come at the expense of investor protection

The Coordination Gap

In the absence of finalized legislation, regulators are trying to coordinate.

A recent agreement between the SEC and CFTC aims to:

- align oversight responsibilities

- reduce jurisdictional confusion

- create a temporary framework while laws are debated

But this coordination is still a bridge — not a permanent solution.

The Bigger Issue: Technology Moves Faster Than Law

This debate reflects a deeper structural problem:

- Technology evolves exponentially

- Regulation evolves incrementally

By the time laws are written:

- markets may have already shifted

- new models (DeFi, tokenization, AI-driven finance) may emerge

- enforcement may lag behind innovation cycles

What’s Really Being Decided

This isn’t just about crypto — it’s about how the U.S. governs emerging technology.

Two philosophies are competing:

1. Build First, Regulate Later

- prioritize innovation

- allow experimentation

- refine rules over time

2. Regulate Early, Prevent Risk

- enforce guardrails upfront

- minimize systemic threats

- protect participants before scaling

The Bigger Picture

The outcome of this debate will shape:

- how quickly crypto integrates into traditional finance

- whether the U.S. becomes a leader or follower in digital assets

- how trust is built between institutions and users

For now, the system sits in between:

- partial clarity

- ongoing political negotiation

- evolving agency strategies

Final Thought

Crypto isn’t just testing financial systems —

it’s testing regulatory philosophy itself.

And until legislation catches up, the market will continue operating in that gray zone:

part innovation lab, part policy experiment.

Using Crypto Without Selling It: A New Path Into Homeownership

There’s a quiet shift happening in how digital assets are being used — not traded, not speculated on, but structured.

A new collaboration between Coinbase and Better Home & Finance introduces a mortgage framework that allows borrowers to tap into the value of their crypto holdings without selling them. Instead of converting assets like Bitcoin or USDC into cash, borrowers can pledge them as collateral to secure a separate loan — one specifically used to fund a home down payment.

The mortgage itself doesn’t change. It remains a standard, conforming loan aligned with Fannie Mae guidelines, originated and serviced through traditional channels. What changes is how the borrower gets through the front door.

This distinction matters.

Rather than forcing the housing system to adapt to crypto, this model works around it. The volatility of digital assets is contained within the collateralized loan layer, while the mortgage remains within a framework lenders and regulators already understand. It’s a separation of risk — and a deliberate one.

For borrowers, the appeal is straightforward: maintain exposure to digital assets while unlocking liquidity. No forced liquidation, no taxable sale event — just a restructuring of how value is accessed.

But the implications go further than convenience.

This approach reframes crypto not as currency, but as functional collateral. It moves digital assets one step closer to being treated like other forms of wealth — something that can be leveraged, not just held or spent.

At the same time, it raises new questions. What happens if collateral values drop sharply? How do lenders manage the interaction between a stable mortgage and a volatile backing asset? And how broadly can this model scale without introducing new forms of systemic risk?
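The first of those questions comes down to loan-to-value (LTV) arithmetic. The sketch below is purely illustrative: the loan size, the collateral amount, the prices, and the 80% limit are all made-up numbers, and nothing here describes the actual terms of the Coinbase and Better product, including whether it has any threshold of this kind.

```python
def loan_to_value(loan_balance: float, collateral_units: float, unit_price: float) -> float:
    """LTV ratio: loan balance divided by the current value of the pledged collateral."""
    return loan_balance / (collateral_units * unit_price)


def price_at_ltv(loan_balance: float, collateral_units: float, ltv_limit: float) -> float:
    """Unit price at which the LTV ratio would reach ltv_limit."""
    return loan_balance / (collateral_units * ltv_limit)


# Hypothetical scenario: a 50,000 loan backed by 1.5 BTC priced at 100,000.
loan, btc, start_price = 50_000, 1.5, 100_000

start_ltv = loan_to_value(loan, btc, start_price)
ltv_after_drop = loan_to_value(loan, btc, start_price * 0.6)  # 40% price drop
trigger_price = price_at_ltv(loan, btc, 0.8)                  # hypothetical 80% limit

print(f"start LTV {start_ltv:.1%}, after 40% drop {ltv_after_drop:.1%}, "
      f"80% LTV reached near a price of {trigger_price:,.0f}")
```

The loan balance stays fixed while the collateral value moves, so the ratio can change quickly even when the mortgage itself is unaffected.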

Those questions remain open.

What’s clear is that this isn’t about replacing traditional finance. It’s about interfacing with it — carefully, structurally, and on familiar terms.

And in that sense, the real innovation isn’t crypto entering housing.

It’s crypto learning how to fit inside the existing system.

OKX Isn’t Rushing to Go Public — And That Might Be the Point

While much of the crypto industry once treated public listings as a milestone, OKX is taking a different route: build first, list later — if it makes sense at all.

Speaking at a recent industry event, the company made its position clear. An IPO isn’t a goal. It’s a consequence — and only one worth pursuing if it delivers real, sustained value.

A Shift Away From “IPO as Validation”

For years, going public has been seen as a signal of legitimacy. But OKX is pushing back on that idea.

The company’s leadership emphasized that entering public markets only makes sense if it can consistently return value to shareholders, not just capitalize on hype or timing.

That stance reflects a growing awareness inside crypto:

some public listings haven’t exactly strengthened the industry’s credibility.

Even major players like Coinbase — one of the most recognizable names in the space — have experienced significant volatility since going public, raising questions about how traditional markets price crypto-native businesses.

A $25 Billion Valuation — By Design, Not Hype

OKX recently secured a strategic investment tied to Intercontinental Exchange, the parent company of the New York Stock Exchange, valuing the company at around $25 billion.

But interestingly, leadership suggested the valuation was intentionally conservative.

The reasoning?

Undervaluing now may create stronger long-term alignment with future shareholders — a stark contrast to the aggressive pricing strategies often seen in both IPOs and earlier crypto cycles.

Learning From the Industry’s Past

There’s a clear subtext here:

crypto has already seen what happens when assets are pushed into markets too quickly.

From the ICO boom to waves of token launches, the industry has repeatedly faced the consequences of:

- overpricing

- short-term speculation

- weak post-launch performance

OKX appears determined not to repeat that cycle in the public equity markets.

Global Structure as a Competitive Edge

Unlike more U.S.-centric exchanges, OKX operates across multiple regions — including Europe, Latin America, and Asia.

That global footprint creates structural advantages:

- deeper liquidity across time zones

- continuous trading activity

- a more unified order book

This becomes especially relevant when markets are fragmented or inactive in certain regions — something traditional finance still struggles with.

Beyond Trading: The Next Phase

OKX isn’t positioning itself solely as an exchange.

Its partnership with Intercontinental Exchange points toward a broader ambition:

bringing traditional financial assets onchain, including equities and other instruments.

In this model:

- infrastructure providers build the rails

- platforms like OKX become distribution layers

That’s a different role — and potentially a more durable one.

The Long Game

Perhaps the most telling part of the strategy is the timeline.

OKX isn’t framing its growth in quarters or even years.

It’s thinking in decades.

An IPO, in that context, becomes less about timing the market —

and more about whether the business has reached a level of stability where public participation actually makes sense.

Final Thought

In an industry that often moves fast and lists faster, OKX is choosing restraint.

TRON Steps Into the Regulated Arena — Slowly, Structurally

For years, TRON has existed in a strange position — massive in usage, yet largely absent from regulated U.S. infrastructure.

That may be starting to change.

Anchorage Digital, a federally chartered digital asset platform, has announced support for TRON custody and staking. On the surface, it’s a product expansion. Underneath, it signals something more important: a shift in how certain blockchain networks are being brought into institutional frameworks.

From Outside to Inside the System

TRON isn’t a small network trying to break through. It’s already one of the most active blockchains globally, particularly as a settlement layer for stablecoins like Tether’s USDT.

In fact, more USDT circulates on TRON than on Ethereum, highlighting its role in high-volume, low-cost transfers across global markets.

But scale hasn’t translated into regulatory acceptance.

Until now, major U.S.-regulated platforms have largely kept their distance — not because of lack of activity, but because of compliance complexity.

Why It Was Left Out

U.S. institutions operate under strict requirements:

- Anti-money laundering (AML) standards

- Bank Secrecy Act (BSA) compliance

- due diligence on networks, founders, and transaction flows

TRON — and its founder Justin Sun — have faced ongoing scrutiny tied to:

- allegations of illicit activity

- sanctions-related concerns

- regulatory uncertainty

These factors created a barrier — not technical, but institutional.

Anchorage’s Role: Controlled Access

Anchorage isn’t just another crypto company. Its federal charter positions it as a bridge between crypto infrastructure and regulated finance.

By adding TRON support, it’s not endorsing the network — it’s structuring how institutions can interact with it safely.

The rollout includes:

- custody for TRX

- institutional wallet integration

- phased support for TRC-20 assets

- native staking capabilities

In practical terms, this allows institutions to:

- hold TRON-based assets

- manage stablecoins on the network

- participate in staking within a compliant environment

A Pattern Emerging

Interestingly, Anchorage previously supported smaller networks like Sui and Aptos before adding TRON.

That sequencing wasn’t about size — it was about regulatory clarity.

TRON’s inclusion now suggests that the barrier wasn’t permanent. It was a matter of timing.

The Regulatory Signal

This move comes after the U.S. Securities and Exchange Commission recently dropped certain claims tied to TRON and its ecosystem.

While not a full endorsement, it reduces some of the uncertainty that previously kept institutions on the sidelines.

In markets, that kind of signal matters.

It doesn’t guarantee adoption — but it makes participation possible.

What This Actually Changes

This isn’t about retail users. It’s about infrastructure.

For the first time, TRON is being:

- custody-enabled inside a regulated entity

- integrated into institutional workflows

- positioned as part of a compliant asset ecosystem

That’s a different level of access.

The Bigger Shift

Crypto networks don’t become institutional by growing bigger.

They become institutional when regulated infrastructure decides to support them.

Anchorage’s move doesn’t rewrite TRON’s history.

But it does change its positioning.

Final Thought

TRON didn’t enter the U.S. through exchanges or hype.

It entered through custody.

And in institutional finance, that’s often where legitimacy begins.